U.S. Department of Transportation

Federal Highway Administration

1200 New Jersey Avenue, SE

Washington, DC 20590

202-366-4000

Federal Highway Administration Research and Technology

Coordinating, Developing, and Delivering Highway Transportation Innovations

SUMMARY REPORT

This summary report is an archived publication and may contain dated technical, contact, and link information

Publication Number: FHWA-HRT-14-077, Date: July 2014


Day One: Presentations

Expert presentations are summarized in the following section.

Driver–Driver and Other Road Users' Data for Human Factors Research

Dr. Marco Dozza

Chalmers University of Technology

Overview

Dr. Marco Dozza reminded workshop participants that safety is an ongoing concern as the complexity of the roadway environment continually increases. This complexity particularly jeopardizes cycling safety. Roadway space is commonly shared among cyclists and other road users, such as drivers, and the interaction between these different modes of transportation creates a high risk for crashes and potential injuries and fatalities.

In Europe, 1,994 cyclists were killed in 2010, accounting for 6.8 percent of total road fatalities, compared with 2 percent of road fatalities in the United States.1,2 Improving cycling safety is therefore crucial, because cycling is becoming an increasingly popular mode of transportation. Electric bicycles (e-bikes), which have an integrated electric motor for propulsion, heighten this concern because of their higher speeds and growing prevalence. A better understanding of how cyclists behave in traffic is needed to develop improved safety measures. One way to gain this understanding is to adapt existing methods of naturalistic data collection for cars and trucks to collect naturalistic cycling data.

Dozza informed participants that the goal of this research is to understand how bicyclists using traditional bicycles and e-bikes behave in traffic, and the extent to which safety-critical situations (i.e., crashes and near crashes) differ for e-bikes compared with traditional bicycles. The researchers collected and analyzed naturalistic cycling data and also acquired additional datasets from the Swedish Traffic Accident Data Acquisition (STRADA) database. Dozza informed workshop participants that, in Sweden, 70 percent of bicycle crashes are reported in accident databases. Accordingly, cycling accidents in the STRADA database were isolated and combined with the naturalistic data to better address a number of research questions. Dozza told workshop participants that this project is expected to provide the research community and the transportation industry with methods to gather naturalistic data, in particular naturalistic cycling data, to understand accident causation, to investigate cycling behavior, to inform regulations and infrastructure design, and to test intelligent systems.

Naturalistic Cycling Data

Naturalistic data collection refers to data collected in traffic by road users performing their usual daily activities. Traditionally, naturalistic data are recorded from instrumented cars and trucks. There are many reasons researchers are interested in collecting naturalistic data, summarized as follows:

Dozza suggested that the same reasons researchers collect naturalistic driving data also apply to bicycles. In addition, as the number of cyclists increases, it becomes more important for researchers to understand the behavior of road users other than drivers. All road users have to contend with issues of distraction and obedience to road rules, and they must now also adapt to the speed of the new e-bikes.

Dozza highlighted that gathering naturalistic cycling data will make it possible to improve current regulations and road infrastructure in Europe. In 2012, there were 1.2 million new e-bikes on the road; however, bike lanes in Europe may not fully accommodate e-bikes, which may require new infrastructure.7 For example, in Sweden, pedestrians and cyclists frequently share the same sidewalk. With naturalistic cycling data, researchers can test intelligent systems, such as new smartphone applications, that promise to help cyclists, and can check whether those systems induce destructive behavior. Overall, the study findings will contribute to the development of countermeasures to reduce cyclist trauma.

Dozza informed participants that naturalistic cycling data collection requires a sophisticated network of sensor processing and recording systems. Equipment requirements for bicycles differ from those for cars; for example, weight and weather resistance matter more for bicycles than for cars. In this study, the researchers recorded naturalistic data by using an instrumented traditional bicycle, which was fitted with the following equipment, as shown in figure 1:

© Marco Dozza

Figure 1. An instrumented traditional bicycle.

Dozza informed participants that the data gathered in this cycling study are fundamentally very similar to those gathered in driving studies. The objective data collected include video, position, and kinematics (e.g., from GPS and an inertial measurement unit (IMU)), in addition to control inputs (e.g., brakes and pedals). The subjective data collected include interviews, diaries, demographics, and cycling behavior questionnaires. Other types of data, such as glance behavior and map use, were derived from these sources. Analyses were performed by using MATLAB (a high-level language and interactive environment for numerical computation, visualization, and programming) and NatWare (a toolkit developed at the Vehicle and Traffic Safety Center at Chalmers).

Cycling Behavior

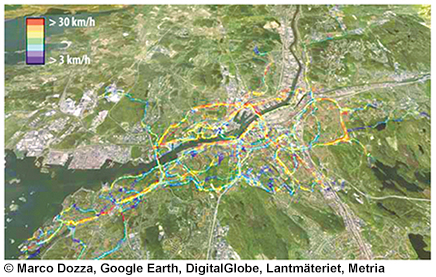

Figure 2 shows average speeds computed from the naturalistic cycling data. This map of downtown Gothenburg, Sweden, represents how cyclists use different types of roads. In Sweden, 30 km/h (18.6 mi/h) is the maximum speed for bicycles before riders may be fined. The red areas on this map show where cyclists on traditional bicycles were riding illegally, above this limit. When the same map is produced for e-bikes, it is expected to show even higher levels of excess speed.

Figure 2. Average cycling behavior speeds.
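The text does not describe how the speed map in figure 2 was computed. A minimal sketch, assuming each GPS sample carries a speed estimate and that road segments are approximated by a simple latitude/longitude grid (both assumptions, not the study's documented method), bins samples into cells, averages speed per cell, and flags cells whose mean exceeds the 30 km/h limit cited above. The function names and the 0.001-degree cell size are hypothetical.

```python
import math
from collections import defaultdict

SPEED_LIMIT_KMH = 30.0  # Swedish bicycle limit cited in the text


def average_speed_by_cell(samples, cell_size_deg=0.001):
    """Average speed per grid cell.

    samples: iterable of (lat_deg, lon_deg, speed_kmh) tuples.
    cell_size_deg: hypothetical grid resolution in degrees.
    Returns a dict mapping (row, col) cell indices to mean speed in km/h.
    """
    sums = defaultdict(float)
    counts = defaultdict(int)
    for lat, lon, speed in samples:
        cell = (math.floor(lat / cell_size_deg), math.floor(lon / cell_size_deg))
        sums[cell] += speed
        counts[cell] += 1
    return {cell: sums[cell] / counts[cell] for cell in sums}


def cells_over_limit(cell_means, limit_kmh=SPEED_LIMIT_KMH):
    """Cells whose mean speed exceeds the limit (the map's 'red areas')."""
    return sorted(cell for cell, mean in cell_means.items() if mean > limit_kmh)


# Hypothetical samples: two slow points in one cell, two fast points in another.
samples = [
    (57.7001, 11.9701, 12.0),
    (57.7002, 11.9702, 16.0),
    (57.7051, 11.9751, 32.0),
    (57.7052, 11.9752, 34.0),
]
means = average_speed_by_cell(samples)
print(cells_over_limit(means))
```

In practice, matching samples to actual road segments would replace the grid; the grid is only a stand-in that keeps the example self-contained.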

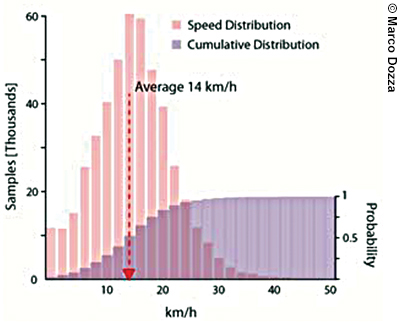

The researchers for this study went on to examine the speed profile of cyclists riding traditional bikes, as shown in figure 3. The average speed for cyclists was about 14 km/h (8.7 mi/h).

The research team collected the data for figure 3 in 2012 from 16 cyclists using traditional bikes. Researchers are currently performing the same study with cyclists using e-bikes and, based on preliminary data, expect the average speed of e-bike users to be almost 10 km/h (6.2 mi/h) higher. This is important because some bike paths in Sweden are shared with pedestrians, and it is well known that higher speed increases the risk of an accident.

Figure 3. Cyclists' speed profile.
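A speed profile such as the one in figure 3 can be derived from logged positions, although the text does not give the computation. As a hedged sketch, assuming timestamped GPS fixes in decimal degrees, point-to-point speed can be estimated from the haversine great-circle distance and then summarized; the helper names and the sample track are hypothetical, and a real pipeline would also filter GPS noise or fuse IMU data.

```python
import math
import statistics

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius
KMH_PER_MS = 3.6
MPH_PER_KMH = 0.621371


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def segment_speeds_kmh(fixes):
    """Speed (km/h) between consecutive fixes given as (t_seconds, lat, lon)."""
    speeds = []
    for (t0, lat0, lon0), (t1, lat1, lon1) in zip(fixes, fixes[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # skip duplicate or out-of-order timestamps
        speeds.append(haversine_m(lat0, lon0, lat1, lon1) / dt * KMH_PER_MS)
    return speeds


# Hypothetical track: riding due north, 0.000035 degrees of latitude per second.
track = [(t, 57.70 + 0.000035 * t, 11.97) for t in range(6)]
speeds = segment_speeds_kmh(track)
mean_kmh = statistics.mean(speeds)
print(f"mean speed: {mean_kmh:.1f} km/h = {mean_kmh * MPH_PER_KMH:.1f} mi/h")
```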

The research team also used the naturalistic cycling data it gathered as observational data, which is considered one of the many benefits of gathering naturalistic data. The team examined cyclists' obedience to cycling rules and gathered information on gender, helmet use, crossing behavior, and proper light usage at night.

Accident Causation

The research team performed an event-based safety analysis. The team examined 63 critical events (both crashes and near crashes) identified from button presses by the cyclists, who were instructed to press the button whenever they experienced a safety-critical or uncomfortable situation. These 63 events were complemented by 126 baseline events chosen at random. The team annotated factors related to the environment and road users' behavior for all events. The team then calculated odds ratios by comparing the prevalence of each factor in critical events with its prevalence in baseline events. For this analysis of critical events, the team also developed a map pinpointing where the baseline and critical events occurred around Gothenburg.

The team found that daylight was not a risk factor when baseline and critical events were compared; however, the analysis indicated that cyclists are 10 times more likely to get into trouble when there are surface issues (e.g., holes) and are at even greater risk near intersections with reduced visibility. The team also learned that risk increased when other pedestrians or cyclists were on a potential collision path with the cyclist participating in the study.
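The odds ratios described above compare how often a factor is present in the 63 critical events versus the 126 baseline events. A minimal sketch of that calculation is shown below, with a Woolf (log-based) 95 percent confidence interval and a Haldane-Anscombe correction for zero cells. The interval method and the correction are standard choices assumed here, not ones the presentation specified, and the factor counts in the example are hypothetical, not the study's results.

```python
import math


def odds_ratio(crit_with, crit_total, base_with, base_total, z=1.96):
    """Odds ratio for a factor in critical vs. baseline events.

    crit_with / base_with: events in which the factor was annotated as present.
    Returns (odds_ratio, ci_low, ci_high) using Woolf's log method.
    """
    a = crit_with                # critical, factor present
    b = crit_total - crit_with   # critical, factor absent
    c = base_with                # baseline, factor present
    d = base_total - base_with   # baseline, factor absent
    if 0 in (a, b, c, d):
        # Haldane-Anscombe correction keeps the ratio and its log finite.
        a, b, c, d = [x + 0.5 for x in (a, b, c, d)]
    ratio = (a * d) / (b * c)
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    low = math.exp(math.log(ratio) - z * se_log)
    high = math.exp(math.log(ratio) + z * se_log)
    return ratio, low, high


# Hypothetical counts: a surface issue noted in 10 of 63 critical events
# and in 2 of 126 baseline events.
ratio, low, high = odds_ratio(10, 63, 2, 126)
print(f"OR = {ratio:.1f} (95% CI {low:.1f} to {high:.1f})")
```

With so few events per factor, the confidence interval is typically wide, which is worth reporting alongside the point estimate.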

Because the team had only six crashes to work with, it also used near crashes (safety-critical situations from the button presses) in its analysis; however, this raises the question of whether near crashes are predictive of crashes. To test this assumption, the team will combine its data with bicycle accident data from STRADA. For this analysis, the team will also take exposure into account, along with the number of single-bicycle accidents during each hour of the day. The main purpose of this analysis is to show researchers that they can combine different data to address questions more effectively and that safety-critical situations are a sound surrogate for crashes.
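Taking exposure into account means comparing events per unit of riding rather than raw counts. As a rough sketch, assuming hourly counts of near crashes, hours ridden in each hour-of-day bin, and hourly counts of single-bicycle crashes with some exposure estimate (all hypothetical inputs), the code below normalizes each series by exposure and measures how closely the two hourly profiles agree with a Pearson correlation. This is only one way such a surrogate check could be framed, not the team's stated method.

```python
import math


def rates_per_exposure(counts, exposure):
    """Events per unit exposure for each hour-of-day bin (0.0 where exposure is 0)."""
    return [c / e if e > 0 else 0.0 for c, e in zip(counts, exposure)]


def pearson(x, y):
    """Pearson correlation of two equal-length, non-constant sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical four-bin example (e.g., morning, midday, evening, night).
near_crashes = [6, 3, 8, 1]
hours_ridden = [30.0, 25.0, 32.0, 10.0]
crashes = [120, 70, 150, 30]
crash_exposure = [1000.0, 900.0, 1100.0, 400.0]

near_rate = rates_per_exposure(near_crashes, hours_ridden)
crash_rate = rates_per_exposure(crashes, crash_exposure)
print(f"correlation of hourly profiles: {pearson(near_rate, crash_rate):.2f}")
```

A real analysis would use all 24 hourly bins and account for the small number of events per bin.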

Future Trends: Cooperative Systems and Wireless Communication

Dozza told participants that ongoing research is focusing on wireless communication methods for bicycles. In particular, smartphone applications are being developed to address bicyclist safety. One example is BikeCOM, a cooperative application that assists drivers and cyclists at intersections. This application, developed by a student at Chalmers University, connects a bicycle and a car approaching an intersection: it transmits the positions of the two, calculates the estimated time to collision, performs a threat assessment, and, depending on the probability of collision, issues an audible warning to both the bicyclist and the driver. Although this application was originally designed for bicyclists, other road users, such as drivers and pedestrians, could also use it. The application is still in development and has mainly served as a proof of concept for cooperative systems that address multiple road users, including cyclists.
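BikeCOM's logic is described only at a high level here (exchange positions, estimate time to collision, warn both parties). The sketch below shows one simple way such a threat assessment could work, assuming each road user reports a position in a shared local frame in meters and a speed toward a known conflict point. The arrival-time window and warning horizon are hypothetical parameters, and this is not BikeCOM's actual implementation.

```python
import math
from dataclasses import dataclass


@dataclass
class RoadUser:
    x_m: float       # position in a shared local frame (meters)
    y_m: float
    speed_ms: float  # speed toward the conflict point (m/s)


def time_to_point(user, px, py):
    """Seconds until the user reaches (px, py) at constant speed."""
    if user.speed_ms <= 0:
        return math.inf  # stationary users never arrive
    return math.hypot(px - user.x_m, py - user.y_m) / user.speed_ms


def assess_threat(bike, car, px, py, window_s=2.0, horizon_s=6.0):
    """Warn both parties if they would reach the conflict point at nearly the
    same time and that moment is close enough to act on.

    window_s and horizon_s are hypothetical tuning parameters.
    """
    t_bike = time_to_point(bike, px, py)
    t_car = time_to_point(car, px, py)
    warn = abs(t_bike - t_car) < window_s and max(t_bike, t_car) < horizon_s
    return t_bike, t_car, warn


# Hypothetical approach: bicycle 20 m west at 5 m/s, car 40 m south at 10 m/s.
t_bike, t_car, warn = assess_threat(RoadUser(-20, 0, 5), RoadUser(0, -40, 10), 0, 0)
print(t_bike, t_car, "AUDIBLE WARNING" if warn else "no warning")
```

A probabilistic version would replace the fixed window with a collision probability computed from position and speed uncertainty, which is closer to the "depending on the probability of collision" behavior described above.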

Another example is Safety Pilot, a U.S. Department of Transportation (USDOT) field operational test (FOT) of vehicle-to-vehicle (V2V) communication. Dozza told participants that the test is currently gathering data from 2,843 vehicles and one instrumented bicycle from Sweden to evaluate traffic patterns and behavior.

Lessons Learned and Future Directions

In summary, Dozza noted that future research can benefit from the use of naturalistic data. For example, naturalistic data make it possible to combine video with other data types to address accident causation, road-user behavior (including obedience to traffic rules and distraction), infrastructure design, and intelligent applications. In addition, some important research questions can be better answered by combining naturalistic data with data from accident databases. Dozza also noted that naturalistic datasets can be reused and told workshop participants that the next step for this research is to compare behavior across electric and non-electric two-wheelers. Existing tools and methods from naturalistic driving studies and FOT analyses will be reused, and the new data will be integrated. Ultimately, wireless communication among road users will complement naturalistic data, providing new information about road users and their surroundings.

Discussion

After the presentation, the group discussed various topics, including the following:

Additional Resources

Dozza made a selection of additional resources available to participants. These resources are outlined below.

Videos

Papers

Driver and Pedestrian Recognition Behavior for Avoiding Conflict with Each Other at an Intersection

Dr. Toru Hagiwara

Hokkaido University, Japan

Dr. Hidekatsu Hamaoka

Akita University, Japan

Overview

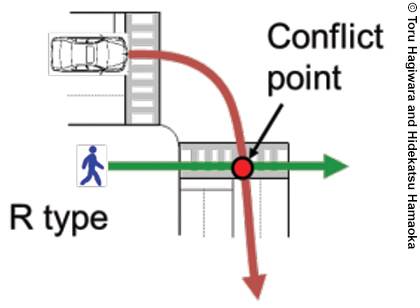

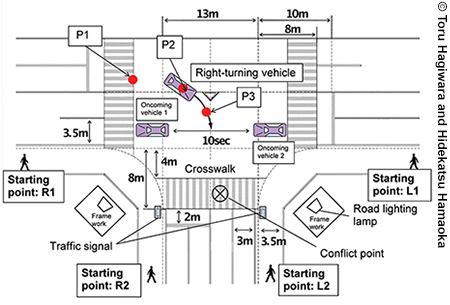

Dr. Toru Hagiwara and Dr. Hidekatsu Hamaoka informed participants that every year in Japan, where vehicles travel on the left side of the road, there are many fatal accidents involving right-turning vehicles and pedestrians in crosswalks. For example, in 2012 there were 4,411 motor-vehicle-related fatalities, and more than 1,500 of these were pedestrian fatalities.8 Pedestrian accidents occur mainly at intersections, and many are of the kind known as R-type accidents. An R-type accident occurs when a pedestrian crossing in the crosswalk approaches from the same direction as a right-turning vehicle, as shown in figure 4. A driver's failure to detect pedestrians as they cross is one of the main reasons these accidents occur. For this reason, drivers need help to become more aware of pedestrians in the crosswalk.

Figure 4. An R-type accident.

This presentation focused on three studies that investigated driver and pedestrian recognition behavior as a basis for developing ways to avoid conflict. These studies focused on the following:

Driver Recognition Behavior

For the first study, the research team looked at how drivers recognized pedestrians at intersections. The team assessed how drivers avoided conflicts with pedestrians approaching from the right and how drivers anticipated the pedestrian's progress across the intersection. The team conducted field experiments to measure the time drivers take to recognize a pedestrian and measured drivers' avoidance behavior under various conflict conditions as a function of the pedestrian's visibility. To measure drivers' avoidance behavior, the team varied the interval between the time at which the pedestrian passed the conflict point, or point of impact, and the time at which the right-turning vehicle passed the conflict point.

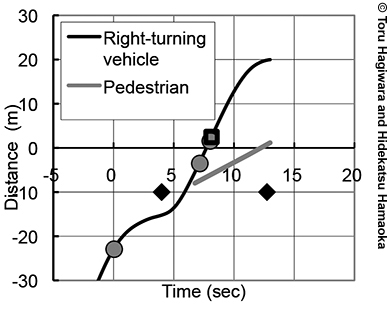

The team developed a time–space diagram and estimation for four types of driver-avoidance behavior. One of these avoidance behaviors is front passing, which is when the right-turning vehicle passes through the conflict point in front of the pedestrian, as shown in figure 5. Other behaviors include stopping, which is when the right-turning vehicle stops before the conflict point to avoid hitting the pedestrian; avoidance, which is when the driver brakes and slows to yield to the pedestrian after starting to turn right; and passing behind, which is when the right-turning vehicle passes through the conflict point after the pedestrian without braking and slowing.

Figure 5. Front-passing diagram.

The team used the following formulas to calculate the predicted time lag (PTL) and observed time lag (OTL):

$$\text{PTL} = \text{Time 1} - (\text{Time 2} + \text{running time}) \tag{1}$$

$$\text{OTL} = \text{Time 1} - \text{Time 3} \tag{2}$$

Time 1 is the time when the pedestrian passes through the conflict point, Time 2 is the time when the first oncoming vehicle passes through the conflict point, and Time 3 is the time when the right-turning vehicle passes through the conflict point. If the driver does not perform any avoidance behavior, the running time from the start to passage through the conflict point is 5.02 sec. The team performed 315 runs in the field. Results showed that:

Overall, the authors of this study of drivers' pedestrian-recognition behavior found that the driver's choice of avoidance behavior was correlated with the PTL relative to the pedestrian. The minimum PTL at which drivers would yield to the pedestrian at the conflict point was approximately 2 sec. In addition, drivers tended to pass behind the pedestrian when they had focused on the pedestrian before starting the right turn.
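Equations (1) and (2) and the four avoidance behaviors can be expressed compactly in code. The sketch below uses the 5.02-sec running time from the text; the classification rules are a simplified reading of the behavior definitions given earlier, and they assume hypothetical "stopped" and "braked" flags already extracted from vehicle data. The timestamps in the example are also hypothetical.

```python
RUNNING_TIME_S = 5.02  # start-to-conflict-point time with no avoidance behavior


def predicted_time_lag(time1, time2, running_time=RUNNING_TIME_S):
    """Equation (1): PTL = Time 1 - (Time 2 + running time)."""
    return time1 - (time2 + running_time)


def observed_time_lag(time1, time3):
    """Equation (2): OTL = Time 1 - Time 3."""
    return time1 - time3


def classify_avoidance(time1, time3, stopped, braked):
    """Simplified mapping of one run onto the four behaviors.

    time1: pedestrian passes the conflict point; time3: right-turning vehicle does.
    stopped / braked: hypothetical flags derived from the vehicle's speed trace.
    """
    if observed_time_lag(time1, time3) > 0:
        return "front passing"   # vehicle reached the conflict point first
    if stopped:
        return "stopping"        # halted before the conflict point
    if braked:
        return "avoidance"       # slowed to yield after starting the turn
    return "passing behind"      # passed after the pedestrian without braking


# Hypothetical run: pedestrian at 12.0 s, first oncoming vehicle at 5.0 s.
ptl = predicted_time_lag(12.0, 5.0)
print(f"PTL = {ptl:.2f} s")
print(classify_avoidance(12.0, 10.5, stopped=False, braked=False))
```

A positive PTL means that, without avoidance, the vehicle would be expected to reach the conflict point before the pedestrian; a positive OTL means it actually did.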

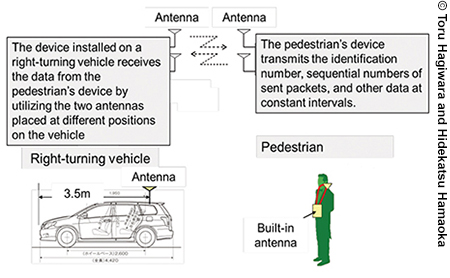

Performance of a PV-DSRC System in which DSRC Transmits Data to Drivers and Pedestrians at Intersections

The researchers of this second study assessed the data transmission capability of a pedestrian-vehicle dedicated short-range communications (PV-DSRC) system in situations involving right-turning vehicles and pedestrians at intersections. The goal was to measure the performance of PV-DSRC data transmission between the right-turning vehicle and the pedestrian under dynamic conditions at experimental intersections. The researchers also evaluated the capability of the PV-DSRC data transmission system at actual intersections. To evaluate this system, the researchers reproduced potential collision conflicts between right-turning vehicles and pedestrians on a test track in Tomakomai City, Japan, as shown in figure 6. For each run, the pedestrian started from one of the four starting points (R1, R2, L1, L2) shown in figure 6 and then crossed the intersection. The starting point was expected to affect data transmission performance because of the positional relationship between the pedestrian and the DSRC device.

Figure 6. Reproduced collision conflict between right-turning vehicle and pedestrian.

The PV-DSRC system used in these experiments is the same as the intervehicle DSRC (IV-DSRC) system, which met the experimental guidelines for IV-DSRC systems that use the 5.8 GHz band (ITS FORUM RC-005 ver 1.0). Figure 7 shows how the pedestrian communicates with the vehicle. The researchers followed up by conducting a field experiment at three intersections in Yokosuka City, Japan, to evaluate the performance of data transmission between the pedestrian and a right-turning vehicle in a real-world setting. The purpose of this experiment was to assess how intersection size, the location of the right-turning vehicle in the intersection, and the presence of an oncoming vehicle and a leading right-turning vehicle influence data transmission performance. The experiment involved multiple passes through each of three intersections of different sizes: small, medium, and large. At the large intersection, when the distance was between 50 m (164 ft) and -50 m (-164 ft), received power far exceeded the required level, although it tended to be lower when an oncoming vehicle was present. The packet-arrival rate, or throughput, results show that the system achieved the 80-percent packet-arrival rate required for Advanced Safety Vehicle-3 technology: at distances between 100 m (328 ft) and 30 m (98 ft), the right-turning vehicle's packet-arrival rates exceeded the 80-percent threshold.

Figure 7. Pedestrian–vehicle dedicated short-range communications system.
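The packet-arrival results above are reported as rates over ranges of distance. A minimal sketch of that bookkeeping, assuming each transmitted packet is logged with the vehicle's distance at send time and whether the pedestrian device received it (for example, matched by sequence number), groups packets into distance bins and checks each bin against the 80-percent requirement cited above. The bin width and function names are hypothetical.

```python
from collections import defaultdict

REQUIRED_ARRIVAL_RATE = 0.80  # requirement cited in the text


def arrival_rate_by_distance(distances_m, received, bin_m=10.0):
    """Packet-arrival rate per distance bin.

    distances_m: vehicle distance when each packet was sent.
    received: matching booleans, True if the packet arrived.
    Returns {bin_start_m: rate}, ordered by bin start.
    """
    sent = defaultdict(int)
    arrived = defaultdict(int)
    for dist, ok in zip(distances_m, received):
        bin_start = (dist // bin_m) * bin_m  # floor division also handles negative distances
        sent[bin_start] += 1
        arrived[bin_start] += int(ok)
    return {b: arrived[b] / sent[b] for b in sorted(sent)}


def bins_meeting_requirement(rates, threshold=REQUIRED_ARRIVAL_RATE):
    """Distance bins whose arrival rate meets or exceeds the threshold."""
    return [b for b, rate in rates.items() if rate >= threshold]


# Hypothetical log: five packets sent at 35-39 m (one lost), two at 105-106 m.
dists = [35.0, 36.0, 37.0, 38.0, 39.0, 105.0, 106.0]
got = [True, True, True, True, False, True, False]
rates = arrival_rate_by_distance(dists, got)
print(rates, bins_meeting_requirement(rates))
```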

Overall, the study indicated that the data transmission capabilities of a PV-DSRC system between right-turning vehicles and pedestrians at intersections were effective. If equipped with the IV-DSRC system, right-turning vehicles could communicate with pedestrians in crosswalks who cannot be detected by the drivers or local sensors alone. Simultaneously, pedestrians could be alerted to their associated risk. Ultimately, the DSRC data transmission system could provide effective support to drivers who do not notice their risk of colliding with pedestrians both before and while making a right turn.

Behavior of Pedestrians in Crosswalks and Recognition of Approaching Turning Vehicle

In the third field experiment, the researchers studied the crossing behavior of pedestrians in the crosswalk. The researchers investigated how pedestrians identified the approach of right- or left-turning vehicles while crossing. They analyzed pedestrians' head-turning behavior toward right- or left-turning vehicles and considered the limits of what a pedestrian can see and hear. The purpose of this experiment was to identify the point at which pedestrians can confirm an approaching vehicle. The researchers did this by comparing the subjects' head-turning behavior to assess whether each subject's confirmation of the approaching vehicle was average or appropriate.

Figure 8 shows the experimental pedestrian-crossing scenario, which was implemented on a test track. Each pedestrian test subject was asked to walk through the crosswalk as a vehicle made a right or left turn toward the conflict point. Only one vehicle could turn into the crosswalk. Each of the 44 subjects carried out this procedure 16 times. The repetitions of the testing had the following variations:

Figure 8. Pedestrian crossing an experimental intersection.

By linking head-turning angle with the pedestrian's location in the intersection, the researchers characterized head-turning behavior. Subjects included both young and elderly people, and some wore headphones. The tests were conducted during the day and at night. Subjects wore a hat fitted with a head camera and a six-axis sensor to measure head-turning angle precisely (50 Hz, 0.001 deg/s unit).
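The text states that head-turning angle was measured with a six-axis sensor sampled at 50 Hz but does not describe the processing. One common approach, assumed here, is to integrate the gyroscope's yaw rate (deg/s) over time; the sketch below uses the trapezoidal rule. Pure integration drifts, so a real pipeline would likely correct it (for example, with the head camera or accelerometer data); that step is omitted, and the function names are hypothetical.

```python
SAMPLE_RATE_HZ = 50.0  # sensor sampling rate given in the text


def integrate_yaw(yaw_rate_deg_s, initial_deg=0.0, fs_hz=SAMPLE_RATE_HZ):
    """Head-turning angle (deg) at each sample from yaw-rate samples (deg/s),
    using trapezoidal integration between consecutive samples."""
    if not yaw_rate_deg_s:
        return []
    dt = 1.0 / fs_hz
    angles = [initial_deg]
    for prev, curr in zip(yaw_rate_deg_s, yaw_rate_deg_s[1:]):
        angles.append(angles[-1] + 0.5 * (prev + curr) * dt)
    return angles


def peak_head_turn(angles_deg):
    """Largest absolute head-turning angle reached during a crossing."""
    return max(abs(a) for a in angles_deg)


# Hypothetical 1-s glance: steady 45 deg/s yaw rate (51 samples = 50 intervals).
angles = integrate_yaw([45.0] * 51)
print(f"final angle {angles[-1]:.1f} deg, peak {peak_head_turn(angles):.1f} deg")
```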

This pedestrian study documented the importance of designing countermeasures for vehicle-pedestrian accidents from the pedestrian's point of view. The field experiment analyzed the head-turning behavior of crossing pedestrians relative to their starting position and the approach of vehicles.

Summary and Lessons Learned

By conducting field experiments, the researchers were able to study driver recognition behavior, pedestrian recognition behavior, and the potential effectiveness of PV-DSRC systems. In doing so they were able to conclude the following:

Discussion

After the presentation, the presenters noted that the goal of the experiment was to test the most difficult intersection conditions, which is why an intersection without street lights was purposely chosen. The presenters also noted that the pedestrians wore headphones to block out noise, and participants suggested that the researchers rerun the study with pedestrians listening to music through their headphones. One workshop participant observed that, in the experiments, the vehicle approached the pedestrian at a constant speed dictated by the study parameters, and suggested gathering naturalistic data to capture that interaction. The presenters and participants noted that in the United States, drivers turning at an intersection can have blind spots, especially when making a right turn.

Driver–Infrastructure and Roadway Data for Human Factors Research

Dr. Michael Manser

Center for Transportation Safety, Texas A&M Transportation Institute

Formerly with the University of Minnesota

Overview

Dr. Michael Manser examined strategies for collecting and analyzing data on the relationship between the driver and infrastructure, with a focus on intersections and complex interchanges. He discussed four ways to conduct infrastructure research (laboratory, simulator, test track, and real world) and explained how these tools can be used to analyze the relationship among driver, infrastructure, and roadway. The objectives of this presentation were to (1) introduce the range of methods and emerging technologies to obtain and analyze driver–infrastructure and roadway data, (2) discuss the data sources that are used in analyses, and (3) consider more effective ways to approach this type of data.

Research Environment and Capabilities

Manser informed workshop participants that the relationship between driver and infrastructure (e.g., signage and electronic billboards above the roadway) and between driver and roadway (e.g., roadway geometrics or striping) can be examined in four types of research environments: laboratory testing, driving simulation, test track, and real-world field observation. Laboratory research includes tests such as computer-based testing, surveys, and questionnaires. Manser noted that simulators span a continuum of fidelity, ranging from desktop simulators to full driving simulators; however, with advances in technology, all of them are achieving increasingly higher fidelity. Test tracks are closed-course facilities that can be highly controlled, so no other vehicles can interfere with the research protocol. Real-world research environments use vehicles with embedded data collection systems. These vehicles operate in largely uncontrolled environments on a prescribed course or route or on a self-selected route, and researchers then mine the data.

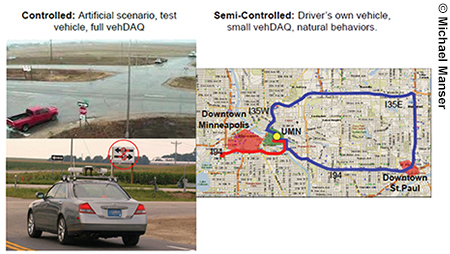

In the last decade, real-world field research environments have matured significantly because of advances in computing power. Manser identified the advantages of two types of real-world research environments: on-road controlled and semi-controlled. An on-road controlled research environment uses an artificial scenario and a test vehicle with an extensive vehicle data acquisition (vehDAQ) system. For example, in a highly controlled on-road environment, drivers proceed through an intersection multiple times under different conditions and cross only when instructed to do so (i.e., when there are specific types of traffic gaps or streams of gaps). The researchers can then determine which gap a driver would normally take and which gap the driver would select in response to alternative intersection signs. In semi-controlled research settings, drivers operate a test vehicle or their own vehicle and exhibit natural driving behaviors, but the researchers retain control of where and when the driving occurs. A small vehDAQ system is installed in either vehicle, and drivers are instructed to follow a prescribed course at a prescribed time. This differs from naturalistic driving, in which drivers choose their routes, timing, and sequence of driving, so there is very little experimental control. Figure 9 shows examples of on-road controlled and semi-controlled studies conducted at the University of Minnesota.

Figure 9. Real-world research environments.

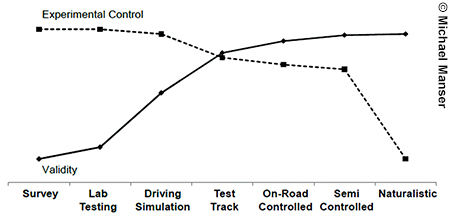

Validity and Experimental Control

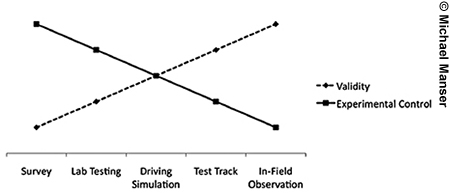

Different research environments involve tradeoffs between validity and experimental control. In terms of validity, researchers must ask whether they are measuring what they intend to measure. In terms of experimental control, researchers want to know how much control they have over the variables involved. To understand the driver and the infrastructure, researchers measure certain variables of interest, including driver, vehicle, and intersection characteristics, time of day, and weather. As illustrated in figure 10, validity is expected to increase linearly from left to right, that is, from surveys to in-field observations. The opposite is expected for experimental control, which is assumed to decrease systematically, with the most experimental control in a survey environment and the least in an in-field observation or real-world environment.

Figure 10. Expected research environments in terms of validity and experimental control.

Figure 11. Adjusted research environments in terms of validity and experimental control.

Although figure 10 shows the expected relationship between validity and experimental control by research environment, Manser proposed an enhanced model. While not yet scientifically proven, the model offers a more realistic way to conceptualize the relationship among the research environments. The enhanced model adds on-road controlled and semi-controlled environments between the test track and naturalistic research environments. As figure 11 illustrates, experimental control can be high in surveys, laboratory testing, and driving simulation, where the driving scenarios can be controlled. With the advent of better technology, experimental control on the test track and in the on-road controlled and semi-controlled environments has become significantly higher. Therefore, instead of decreasing linearly across the range of testing environments, experimental control can be largely maintained from surveys through semi-controlled environments, and to a growing degree in naturalistic studies. Although naturalistic studies can now offer more experimental control, they have not yet achieved the same level as the other environments. Likewise, although validity is lower in survey and laboratory testing environments, it can increase substantially in the on-road controlled and semi-controlled environments. For example, drivers can be placed in real-life driving scenarios in traffic while experimenters retain substantial experimental control by deciding where and when they drive.

Selecting a Research Environment

The choice of research environment may depend on the product development phase; for example, a safety intervention may involve signs, roadway geometrics, or striping. A study of drivers' gap perception would be better suited to a different research environment than a study of a developed product or products. Researchers studying a product concept might consider laboratory testing to understand drivers' mental models and basic understanding of gap perception, in addition to how drivers select which gap or stream of gaps to accept. A driving simulator can show whether one product is better than another. As the product becomes more refined, it is important for researchers to move into naturalistic testing.

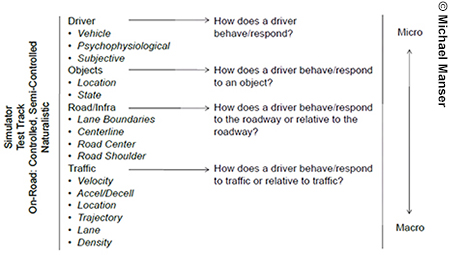

Identifying Data Sources

When looking at infrastructure-based as well as roadway-based safety solutions, there are four categories of data to consider: driver, objects, road or infrastructure, and traffic. Figure 12 illustrates the tools and environments in relation to these data categories. Driver data describe how the driver behaves and responds, in terms of two variables. The first variable captures primary control of the vehicle through acceleration, use of pedals and brakes, and steering. The second variable is physiological data, which measure driver reactions. For example, researchers interested in driver stress can look at galvanic skin responses or, for a faster-changing metric, at heart-rate variability or brain-wave activity.

Figure 12. Tools and environments in relation to data sources.
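Heart-rate variability can be summarized in several ways; one widely used time-domain metric is RMSSD, the root mean square of successive differences between heartbeat (RR) intervals. The presentation does not say which HRV metric was used, so the sketch below only illustrates the kind of physiological measure mentioned above, assuming RR intervals in milliseconds have already been extracted from a heart-rate sensor. The example data are hypothetical.

```python
import math


def rmssd(rr_ms):
    """Root mean square of successive differences between RR intervals (ms)."""
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    if not diffs:
        raise ValueError("need at least two RR intervals")
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def mean_heart_rate_bpm(rr_ms):
    """Average heart rate implied by the RR intervals."""
    return 60_000.0 / (sum(rr_ms) / len(rr_ms))


# Hypothetical RR series (ms) from a short driving segment.
rr = [800, 810, 790, 800]
print(f"RMSSD = {rmssd(rr):.1f} ms, mean HR = {mean_heart_rate_bpm(rr):.0f} bpm")
```

Because RMSSD is computed over short windows, it can track changes on the time scale of individual driving events, which fits the "faster-changing metric" role described above.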

The second data category includes objects in the environment. This is important for infrastructure research because researchers need to know where an object, such as an overhead sign or a roadside traffic sign, is located and how drivers respond to these alternative locations. Drivers may change their behavior appropriately based on the location of the sign, or they might ignore or miss it. Researchers who study intelligent transportation systems (ITS) may need to look at the particular state of signs and how it influences driver behavior.

The third category, road and infrastructure, is important because researchers need to examine how a driver behaves and responds to the roadway or relative to the roadway. Some of the data elements to capture include lane boundaries, centerlines, and road geometrics. Researchers can use these data to understand driver response to specific roadway elements.

The traffic category refers to sources of data that are difficult to control. Traffic encompasses many factors, such as velocity, acceleration or deceleration, location, trajectory, and lane and traffic density. These factors affect driver decisions in traffic, such as speeding, maintaining speed, or crossing intersections. It is a challenge to control these variables.

These data categories should be considered macroscopically as well as in terms of microscopic driving behavior, that is, how individual drivers react to something in the roadway. It is important to understand how the aggregate of all the behavioral changes each driver makes affects the efficiency and safety of road transportation.

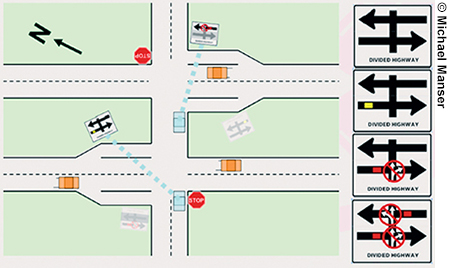

Cooperative Intersection Collision Avoidance System–Stop Sign Assist Project

As an example of selecting a research environment to evaluate a safety measure, Manser described a cooperative intersection collision avoidance system–stop sign assist (CICAS-SSA) project in Minnesota, funded by FHWA. This project is one of many in the United States in which researchers are examining different intersection technologies. The goal of the Minnesota research is to study gap-size rejection at a particularly dangerous rural intersection in Minnesota, which exhibits a higher crash rate than would be predicted for this type of intersection. Because this intersection is representative of many intersections across the United States, the researchers are focused on determining what is problematic about it and what can be done about it.

Manser told the workshop participants that the research team found that gap perception, that is, the ability to judge an acceptable gap in traffic, is fairly poor among drivers. On the basis of previous research, the gap perception problem was considered to be the “root evil” of intersection crashes. The research began with laboratory studies in which the researchers presented several intersection sign concepts to participants, who selected the signs they preferred, as shown in figure 13. Using the laboratory testing facilities, the researchers were able to narrow the field and conduct simulation testing with the more promising concepts. After evaluating those concepts, the researchers moved to product evaluation and tested the best-rated sign in controlled and semi-controlled on-road studies. As the next step, the researchers are conducting a field operational test (FOT), funded by USDOT, to measure the effectiveness of the best-rated sign. This project shows how researchers can evaluate concepts early, refine them, test them in simulation studies, and eventually evaluate the best concept in an FOT.

Figure 13. Experimental signs used in laboratory study.

Infrastructure-Based Driver and Traffic Data

To test whether a sign has an effect on traffic, researchers used an instrumented vehicle with a GPS receiver mounted on its roof. The instrumented vehicle measured driver behavior and recorded the vehicle's location to within centimeters. The researchers of the CICAS-SSA study also set up a fully instrumented intersection, with radar sensors on all legs, to monitor the location and speed of traffic approaching the intersection. Test drivers operated the vehicle both when the sign was on and when it was off. The recorded variables included right-turn, left-turn, and crossing maneuvers; gap-safety margins; movement time and rejected gaps; eye-glance behavior toward the sign and traffic; and subjective measures from random gap-simulation studies.

An interesting aspect that Manser highlighted was that, when these data are fed into the CICAS-SSA system, researchers can examine the interaction between drivers and traffic. It was also possible to examine, dynamically and in real time, the gaps in front of and to the side of the driver, as well as which gaps drivers rejected. The richness of these data allowed researchers to develop profiles of successful gap acceptance and of gap rejection.
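The presentation does not describe how the gap-acceptance profiles were built. One classic technique for summarizing accepted and rejected gaps is Raff's method. This method estimates a critical gap as the value t at which the number of accepted gaps shorter than t balances the number of rejected gaps longer than t. The sketch below is a swapped-in illustration of that idea, not the CICAS-SSA analysis. The function name and the gap values, in seconds, are hypothetical.

```python
def raff_critical_gap(accepted, rejected):
    """Raff's method (simplified sketch): return the smallest observed gap t
    at which the number of accepted gaps shorter than t is at least the
    number of rejected gaps longer than t. Returns None if there is no data."""
    for t in sorted(set(accepted) | set(rejected)):
        accepted_shorter = sum(1 for g in accepted if g < t)
        rejected_longer = sum(1 for g in rejected if g > t)
        if accepted_shorter >= rejected_longer:
            return t
    return None


# Hypothetical gaps in seconds (not study data)
print(raff_critical_gap([5.0, 6.0, 7.0, 8.0], [2.0, 3.0, 4.0, 5.5]))  # 5.5
```

This version only returns observed gap values. Interpolating between the two counts where they cross would give a finer estimate.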

Research Challenges

On the basis of this research and knowledge of driver–infrastructure and roadway data, Manser described significant challenges that researchers face in the field. For example, increasing validity requires more complex research environments, which raise costs. Unlike driving simulator studies, on-road studies often require an instrumented vehicle and an intersection outfitted with sensors. Researchers must combine multiple data streams into one, and analyzing them is a long and intensive process, which raises costs further. In addition, efforts to increase generalizability require larger sample sizes, which also raise costs. Staff time in the field adds to costs because several staff members are needed at once to monitor the instruments and multidirectional traffic flows and to cue the test vehicle. Finally, improving validity depends on larger samples and on differential GPS, which produces more accurate data but at a higher cost. The bottom line is that improving validity increases costs.

Research Gaps

Manser informed the workshop participants that eye trackers have the potential to be a strong tool for examining the efficacy of new infrastructure and roadway-based systems; however, research gaps limit their use for studying driver–infrastructure and roadway data. Errors accumulate with distance, and most eye-tracking manufacturers claim that their eye trackers have about 3-degree accuracy. As a result, when a driver looks at a sign about 61 m (200 ft) away, a 3-degree error corresponds to about 3 m (10 ft) at the sign. That offset is too large to determine reliably whether the driver is looking at the sign.
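The distance figures above follow from simple trigonometry. At viewing distance d, an angular error θ shifts the estimated gaze point by about d · tan θ. The sketch below assumes the quoted 3-degree accuracy. The function name is hypothetical, and the result uses the same unit as the distance.

```python
import math


def gaze_error_at_distance(distance, accuracy_deg=3.0):
    """Offset at the target implied by an angular gaze error (same unit as distance)."""
    return distance * math.tan(math.radians(accuracy_deg))


print(round(gaze_error_at_distance(61.0), 1))   # 3.2 (metres)
print(round(gaze_error_at_distance(200.0), 1))  # 10.5 (feet)
```

Because the offset grows linearly with distance, the same tracker that cannot separate a sign from its surroundings at 61 m may be adequate for a large sign only a few metres away.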

Differential GPS, which keeps track of head position, has an error of several centimeters. Lag times between the eye tracker and the data collection system also introduce error, as does the driver's head movement while he or she proceeds through the intersection. All of these errors accumulate, reducing the accuracy of eye trackers for studying driver behavior at complex intersections. In summary, Manser noted that studying a specific infrastructure or roadway element requires examining eye behavior both toward the element itself and toward the area around it.

New Tools for Accuracy

Manser also highlighted light detection and ranging (LIDAR) data as a promising new tool for driver–infrastructure and roadway data research. LIDAR is a scanning, laser-based system, similar in principle to radar, that can detect objects such as cars, trees, pedestrians, and buildings quickly and accurately. It offers a way to measure how drivers respond to objects in the environment and to correlate driver behavior with environmental inputs in real time. One of the major challenges with LIDAR data is that, although people can recognize the detected objects as buildings, trees, or pedestrians, the computer sees them only as zeros and ones. Therefore, the larger challenge is not collecting the data but interpreting what the data mean.

Manser told workshop participants that Google is one of the major innovators using this tool, which is implemented in the Google self-driving car. The Google car obtains information about its surroundings from LIDAR data along with other sensors. If objects in the environment are changed as part of an experimental study, researchers can begin to correlate, in real time, how drivers behave with the new objects. Manser noted that more effort and research are needed before LIDAR can be used in this way.

Discussion

Participants raised questions about the challenges of using eye-tracking technologies and noted that the usefulness of these technologies depends on the research question under study. For example, if the goal is to measure a driver's attention to large signs that are close by, 3-degree accuracy is sufficient. Because eye-tracking data can be noisy, some researchers segment eye-tracking data into zones for ease of analysis. Although eye tracking is accepted as useful for studying driver attention to nearby features, it remains difficult to measure how drivers attend to objects in the distance.
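Segmenting gaze data into zones can be sketched as follows. Each gaze sample, given as yaw and pitch angles in degrees, is mapped to a named rectangular zone. The sketch then reports the fraction of samples in each zone. The zone names and boundaries are hypothetical. In practice, zones would be chosen to be wide relative to the tracker's accuracy, which is what makes this approach tolerant of noise.

```python
from collections import Counter

# Hypothetical zones: (name, yaw_min, yaw_max, pitch_min, pitch_max) in degrees,
# with yaw positive to the right and pitch positive upward. Illustrative only.
ZONES = [
    ("road_ahead", -10.0, 10.0, -5.0, 5.0),
    ("roadside_sign", 10.0, 30.0, 0.0, 15.0),
    ("instrument_panel", -10.0, 10.0, -25.0, -5.0),
]


def classify_gaze(yaw, pitch):
    """Return the first zone containing the gaze direction, or 'other'."""
    for name, y0, y1, p0, p1 in ZONES:
        if y0 <= yaw < y1 and p0 <= pitch < p1:
            return name
    return "other"


def zone_dwell_fractions(samples):
    """Fraction of gaze samples (list of (yaw, pitch)) falling in each zone."""
    counts = Counter(classify_gaze(yaw, pitch) for yaw, pitch in samples)
    return {zone: n / len(samples) for zone, n in counts.items()}


print(zone_dwell_fractions([(0.0, 0.0), (1.0, 1.0), (20.0, 5.0), (-50.0, 0.0)]))
```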

Sampling rate can also be an issue when using eye trackers, as can individual differences, given that some people limit their scan to 1.5 degrees. Suggestions for analysis included looking at patterns as well as at the accuracy of the data, which requires software capable of detecting such patterns. Manser noted that eye trackers are most effective in daylight but that this may not be a major constraint, because 90 percent of driving occurs during the day. Manser also noted that there are lower tech alternatives to eye tracking, such as video data, which can answer research questions about head position in relation to road activities and pedestrian movements.

Traffic Control Devices and In-Vehicle Systems

Dr. Susan Chrysler

National Advanced Driving Simulator, University of Iowa

Introduction

During this presentation, Dr. Susan Chrysler described how to identify the most effective methods for addressing research needs, based on the target research questions. Examples of research on traffic control devices (TCDs) were used to demonstrate how a variety of methods could be applied to the same research question. The examples were drawn from materials prepared to educate traffic engineers on how to select effective evaluation methods for TCDs. The advantages and disadvantages of alternative research methods, including focus groups and open and closed test courses, were also outlined.

Human Factors Research on Traffic Control Devices

TCDs include signs, pavement markings, and signals. FHWA's Manual on Uniform Traffic Control Devices (MUTCD) provides standards and specifications for the design and application of TCDs in the United States.9 Local and State governments use the MUTCD standards and guidelines to produce TCDs to manage their local road safety and traffic. Localities can modify options permitted in the MUTCD according to an FHWA process that requires evaluation of new candidate TCDs. Chrysler noted that TCD practices are selected for inclusion or modification based on data collected through experimentation and that the MUTCD provides extensive guidance about the review process to be used by State and local governments.

Chrysler informed workshop participants that, because ensuring appropriate evaluation of new TCDs is important, State and local governments often conduct research to receive approval for a new TCD or a new application of an existing TCD. The human factors research topics include visibility, legibility, comprehension, compliance, and preference. Chrysler highlighted that examining these topics in relation to the specific application will determine how effectively TCDs convey directions and warnings to drivers. For example, visibility research addresses the brightness, color, and shape of signs, markings, and signals. Legibility of signs depends on adequate font type and size and on proper color contrast under different road and environmental conditions. Beyond being visible and legible, TCDs need to be understood by drivers. Comprehension can be tested with methods ranging from simple tests to more complex methods involving traffic observations. Collecting preference data identifies the TCD designs with which drivers are more comfortable compared with other allowable options. These preference data, however, are not always predictive of driver behavior.

These considerations require thorough examination to develop valid evaluation methods. The FHWA publication Pedestrian and Bicycle Traffic Control Device Evaluation Methods (Publication No. FHWA-HRT-11-035) recommends a variety of methods for evaluating pedestrian and bicyclist TCDs. The methods presented in that report are applicable to the evaluation of any TCD.

Chrysler informed workshop participants of several key issues that can affect human factors data collection. The issues relate to data collection methods used to evaluate behavior as follows:

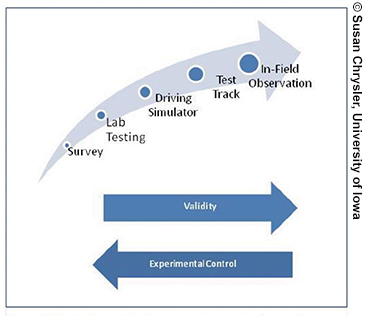

Validity and Experimental Control in Experimental Methods

When selecting research methods, researchers must make trade-offs between validity (i.e., the ability of the study results to predict behavior on the road) and experimental control. As figure 14 illustrates, validity is inversely related to experimental control. On the one hand, in-field observation, for example, allows a “natural” observation in which experimenters do not influence the decisions made by road users. On the other hand, this method allows no control over traffic or weather, so not every subject is exposed to exactly the same conditions. Surveys and laboratory tests are examples of controlled experiments in which the validity of a participant's stated behavior is questionable because of social desirability bias, that is, participants report that they will comply with the device but may not do so in real life.

Figure 14. Trade-off between validity and experimental control.

Overview of Research Methods

Chrysler next gave workshop participants an overview of several research methods. These were categorized as surveys, focus groups, controlled experiments, and observational experiments.

Surveys

This category includes studies in which researchers do not control the amount of time participants view a question. A survey may also allow open-ended responses. In this context, laboratory tests in which researchers control exposure time are not considered part of the survey category. Survey methods may be interactive (e.g., telephone surveys or on-site intercepts) or non-interactive. Non-interactive survey methods include questionnaires conducted via mail or email, as well as self-paced questionnaires on computer or paper.

Chrysler told workshop participants that, to ensure that a survey measures what is intended, researchers need to ask the same question in different ways to verify the answers given. For example, instead of a direct question that requires an open-ended response, questions can use illustrations or scenarios that let subjects demonstrate their understanding by indicating what action they would take. People asked, “What does a yellow line mean?” may not be able to answer; however, when shown a photo of a one-way street with a yellow line, they can correctly identify the direction of travel, often without being able to explain why they answered as they did. Open-ended questions are time-consuming to code and summarize, so multiple-choice or true-or-false questions may be preferred.

Focus Groups

In this category, groups of people are selected based on specific demographics or other characteristics to represent a target population. Evaluation that uses focus groups can occur during early phases of research and can be conducted at multiple locations. Chrysler noted that focus groups are particularly useful for narrowing down the number of TCD alternatives to be tested in subsequent studies that use more controlled methods. They are also helpful for gaining insight into drivers' baseline understanding of a new traffic operation so that a new TCD can be designed to match that native understanding. Chrysler also cautioned that a dominating personality in the group may influence opinions.

Controlled Experiments

Laboratory Experiments of Comprehension

This tool is considered useful for analyzing how well subjects comprehend signs, markings, and signals. Researchers can measure response time and accuracy and can limit the viewing time of traffic signs. A “button box” is suitable for these experiments because it provides a way to measure viewing time, is easy to use, and is therefore accessible to much of the population. Button boxes are portable, can be connected to laptops, and allow experiments to be performed on a large scale.

Simulation

TCDs can also be analyzed by using driving simulators. For example, the influence of changeable message signs on a driver's decisions can be measured by asking verbal questions while the participant is under the task load of driving. Such studies do not need to record trajectory measurements (e.g., speed and acceleration). After driving in the simulator, subjects can be asked about the instructions displayed on a changeable message sign.

Simulators can also reveal the dynamic aspects of behavior. Lane changing over time is an example of an observed behavior with dynamic aspects. The flexibility of using simulators to conduct experiments permits different starting lanes and driving environments and can reveal speed changes and errors that usually precede vehicle crashes. Another advantage of driving simulators is that they provide a detailed, cost-effective way to test multiple versions of TCDs or in-vehicle displays and warning systems.

Closed Course, Test Track, and Open Road

Closed-course test facilities are paved areas that are available for testing when not otherwise in use, such as unused fairgrounds or mothballed runways. In closed-course experiments, actual roadways serve as the test stimuli, and the courses use infrastructure (e.g., road intersections) as scenarios for evaluation. Test tracks are paved, dedicated facilities closed to outside traffic and generally have adjustable field instrumentation to measure vehicle performance parameters. Open-road testing uses roads that are in service or that may be temporarily closed for the test protocol. Drivers are thought to be under a more realistic attentional load when a study is conducted on an actual road.

Drivers can wear or use eye-tracking devices to monitor their visual behavior during testing on closed courses or test tracks. Eye-tracking methods measure the position, duration, and movement of the driver's eyes, which serve as a proxy for where the driver is looking. Researchers have noted that these data are useful for correlating with vehicle inputs and with behavior in response to cues and prompts outside the vehicle. Because eye-tracking devices have technical limitations in daytime use, glance behavior needs to be hand-coded from in-vehicle video cameras. Researchers often prefer test tracks over closed courses because test tracks can accommodate more dangerous and higher speed scenarios.

Observational Experiments

Chrysler told workshop participants that observational methods require no direct contact with drivers. The measurements sought in observational experiments usually capture driving behavior: speed can be measured by using tubes or radar, and video data collection can be used to observe lane changing and compliance with signs and markings. Existing cameras and live coding of traffic from a traffic management center (TMC) give researchers additional resources for evaluating specific conflicts. Test devices can be installed, or researchers can use existing ones. Regardless of the method used, Chrysler noted that finding comparable sites for data collection is a challenge. For example, signs might work for some road geometries but not be appropriate for others.

Open-Road Drives

During the presentation, Chrysler informed workshop participants that some of the procedural limitations when performing open-road drives are the requirements involved when installing new signs or pavement markings. These experiments are preceded by a long process that involves obtaining permission to install devices, material fabrication, and installation. In addition, this method has risks associated with insurance coverage and liability for participants, researchers, and operators. It is more efficient to conduct these types of tests on a closed course where the experimenter has more latitude to make changes in the environment.

Another challenge of open-road research is the lack of experimental control. Participants may be able to identify the purpose of the research upon seeing the first test device. To compensate for the lack of control and to avoid order effects, researchers need to alternate routes so that the first device is not the same for every subject. The inability to control external factors, such as weather, traffic, and time of day, also makes it difficult to equate or measure driving behavior across participants. Possible vandalism and theft of signs and vehicle equipment are further issues to be aware of.
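Alternating routes so that the first device differs across subjects is a form of counterbalancing. A minimal sketch follows. It assumes that routes can be arranged to present the devices in any order, which real road networks may not allow. It builds a cyclic Latin square so that each device appears in each serial position once, then cycles participants through those orders. The device labels, participant IDs, and function names are hypothetical.

```python
def rotated_orders(devices):
    """Cyclic Latin square: each device appears once in every serial position."""
    return [devices[i:] + devices[:i] for i in range(len(devices))]


def assign_orders(participant_ids, devices):
    """Cycle participants through the rotated orders so each is used about equally."""
    orders = rotated_orders(list(devices))
    return {pid: orders[k % len(orders)] for k, pid in enumerate(participant_ids)}


# Hypothetical device labels and participant IDs
for pid, order in assign_orders(["P1", "P2", "P3", "P4"], ["A", "B", "C"]).items():
    print(pid, order)
```

A cyclic square balances serial position but not which device immediately precedes which. A balanced Latin square (Williams design) would also balance first-order carryover.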

Chrysler noted that data collected from on-road tests accurately reflect driving performance under realistic conditions (e.g., lighting, workload, and traffic). On-road experiments are justified by their greater acceptance and validity among practitioners: traffic engineers are often more convinced of a finding if it has been tested on the road. Researchers have to demonstrate to the professional community that using test tracks, driving simulators, focus groups, and surveys to analyze behavior is valid.

On-road experiments can be tied to driving simulator experiments, for example, by using the same vehicle type in the simulator and then asking participants to drive that vehicle on the road on the same day. Having the same person drive the same type of car in both settings on the same day allows researchers to examine how well simulator behavior predicts that person's on-road driving behavior. Eye-tracking studies under night conditions are one example of research that can be analyzed with both methods.

Comparison of Methods

The factors considered important when comparing research methods were cost, time for study, experimental control, safety, diversity of sample, face validity, and the number of alternatives that can be tested. As figure 15 shows, no single method performs best on all of these factors. The choice depends on the specific research question and the measures needed.

Figure 15. Research methods.

One of the critical factors when choosing a research method is cost. Researchers select the most cost-effective methods that can answer the research question. Methods that are simple and inexpensive to set up (e.g., traffic surveillance cameras) are often the most difficult to score and to use for comparison. Conversely, methods with automatic scoring (e.g., a properly constructed survey) tend to be more difficult to set up.

Research Needs

Chrysler highlighted several research needs for attention at the end of the presentation, as follows:

Discussion

Following the presentation, the workshop participants discussed several topics, as follows:

Additional Resources

Chrysler made available a selection of additional resources to workshop participants. These resources are outlined as follows:

Papers

Examples of Studies

Triangulating Data Sources to Understand Driver–Vehicle Behavior

Dr. John Lee

Department of Industrial and Systems Engineering, University of Wisconsin–Madison

Introduction

Dr. John Lee told workshop participants that combining different data sources through a triangulation strategy provides new approaches to addressing research needs. Lee suggested that mixing on-road data with data collected from simulators, or from drivers themselves, provides a comprehensive approach that can compensate for the deficiencies of any particular method. Simulators provide meaningful parameters to take into account when comparing on-road driving behavior with simulator data. Lee presented a proof-of-concept study that used existing infrastructure and data collected via social media to triangulate multiple data sources and investigate driving behavior.

Triangulating Data Sources to Understand Driver–Vehicle Behavior

Rather than picking the single best method, whether in an absolute sense (i.e., the most valid method) or for a particular problem, researchers can combine multiple methods to triangulate a problem. Lee told workshop participants that the proposed approach was to collect data with one method and combine them with other datasets to provide new insights.

The term triangulation is a metaphor drawn from position-fixing in navigation. First, a landmark is selected to establish a line of reference against which to compare the current position. When a second landmark is identified, the position is determined by the point where the reference lines from the two landmarks intersect. In light of this metaphor, a driving simulator and an on-road test can serve as the landmarks, and researchers can determine whether their results converge. Ideally, a third line from a third landmark will also pass through the same spot. In real-world navigation, however, measurement errors usually prevent the third line from passing through the intersection of the other two. The three lines then bound a region, rather than a single point, within which the position is likely to lie.

Lee mentioned that one critical consideration when choosing landmarks in navigation is to select landmarks whose bearings are widely separated, ideally at right angles to each other as seen from the current position. Lee stated that, when this concept is translated into the research context, it is important to select maximally different methods of collecting data to address the same question. The challenge that comes with triangulation is coordinating and synthesizing observations obtained with different methods.
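The navigation metaphor can be made concrete. In the sketch below, each landmark and its observed bearing define a line of position. The fix is where the two lines intersect. The sine of the angle between the lines indicates how well conditioned the fix is. That value is 1 for perpendicular bearings, and near 0 the same bearing error moves the fix much farther, which is why widely separated landmarks are preferred. The coordinate convention (x east, y north, bearings clockwise from north) and all names are assumptions made for illustration.

```python
import math


def line_of_position(landmark, bearing_deg):
    """Line through a landmark along the observed bearing
    (degrees clockwise from north; x = east, y = north)."""
    theta = math.radians(bearing_deg)
    return landmark, (math.sin(theta), math.cos(theta))


def cross(a, b):
    return a[0] * b[1] - a[1] * b[0]


def fix_position(lm1, brg1, lm2, brg2):
    """Intersect two lines of position. Also return |sin| of the angle
    between them: 1 for perpendicular bearings, near 0 for nearly parallel."""
    p1, d1 = line_of_position(lm1, brg1)
    p2, d2 = line_of_position(lm2, brg2)
    denom = cross(d1, d2)
    if abs(denom) < 1e-12:
        raise ValueError("bearings are parallel; no unique fix")
    # Solve p1 + t*d1 = p2 + s*d2 for t by crossing both sides with d2
    t = cross((p2[0] - p1[0], p2[1] - p1[1]), d2) / denom
    return (p1[0] + t * d1[0], p1[1] + t * d1[1]), abs(denom)


# Landmark due north at (0, 10) and landmark due east at (10, 0): observer at the origin
print(fix_position((0.0, 10.0), 0.0, (10.0, 0.0), 90.0))
```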

The challenges with triangulating data include logistical problems, sampling issues, philosophical aspects, costs, and modeling. During the presentation, these challenges were outlined as follows:

Research Approaches

Lee showed workshop participants three different approaches towards triangulating data. These included linking driving simulators to on-road behavior; determining ways to go beyond using existing infrastructure, such as loop detectors; and using drivers themselves as sensors of activity on the road.

Approach 1: Linking Simulators to On-Road Behavior

The first project that Lee presented was a large-scale public–private collaboration involving the National Advanced Driving Simulator (NADS), Iowa State University, Montana State University, and SAIC, with funding from the EAR Program. The purpose of this research project was to replicate actual road segments in different driving simulators, with the aim of improving the usefulness of simulator data for understanding driver behavior. Road segments that road users find particularly challenging, such as roundabouts and gateways, were sampled and recreated in a simulated environment. Figure 16 shows the replication in simulation and its match to what is seen in the real world.

Figure 16. Comparison of real and simulated scenarios.

Researchers analyzed how simulated driving data corresponded to data collected on the road. The mean and standard deviation of driving speed for each state in the different simulators were compared with real-world data collected from driving through roundabouts. Ideally, the mean speed in simulation will follow the mean speed in the real-world data, and Lee noted that the same should hold for the standard deviation of speed. Comparisons across different simulators demonstrated good correspondence across the different roadway situations. One interesting outcome was that motion and visual complexity in a simulated environment had little effect on driving behavior: similar results were obtained whether motion was enabled or disabled.

Models for Transformation and Interpretation of Simulator Data

Lee examined the data by using two types of models: a linear regression and a generative process model. The generative model was intended to capture how drivers actually negotiate a curve, using a closed-loop model with perceptual cues, desired speed, and adjustments to speed.

Regression Model

Lee compared speed values across different roundabouts in simulators and in the real world. He noted that, ideally, when simulator speeds are plotted against real-world speeds, all data points will line up on the diagonal, which represents a perfect match with real-world conditions. Overall, results showed good correspondence between simulated and real-world data, indicating absolute validity for the patterns observed.
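One way to quantify closeness to the diagonal is to regress simulator speeds on real-world speeds at matched locations. A slope near 1 with an intercept near 0 indicates points on the line of equality (absolute validity). A slope near 1 with a consistent offset suggests that the simulator reproduces the pattern but not the level (relative validity). The sketch below uses ordinary least squares with hypothetical speeds. It illustrates the idea and is not the study's analysis.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


# Hypothetical mean speeds (km/h) at matched roundabout locations; not study data
real_world = [30.0, 35.0, 40.0, 50.0]
simulator = [32.0, 36.0, 42.0, 51.0]
slope, intercept = fit_line(real_world, simulator)
print(f"slope={slope:.3f}, intercept={intercept:.2f}")  # slope=0.966, intercept=2.83
```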

Generative Model

The generative model, which was developed from simulator data, provides a deeper understanding of how drivers negotiate the curve in a roundabout. Lee noted that this type of model is a useful way to accumulate knowledge on driving performance, because it is based on a parameterized estimation of driving behavior.
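The presentation does not give the form of Lee's driver model. The sketch below is a generic, hypothetical closed-loop model built from the ingredients named above: a perceptual cue (curvature previewed ahead), a desired speed, and proportional adjustment of speed toward a target. The target speed is capped so that lateral acceleration stays below a comfort limit. All parameter names and values are assumptions. Time steps stand in for distance to keep the sketch short.

```python
import math


def target_speed(curvature, desired_speed, max_lat_accel):
    """Desired free-flow speed, capped so that lateral acceleration
    (speed**2 * curvature) does not exceed max_lat_accel."""
    if curvature <= 0.0:
        return desired_speed
    return min(desired_speed, math.sqrt(max_lat_accel / curvature))


def simulate_speed(curvatures, dt=0.1, desired_speed=15.0,
                   max_lat_accel=2.0, gain=0.5, preview_steps=20):
    """Closed-loop speed profile over a sequence of curvatures (1/m).

    At each step the driver perceives the sharper of the current curvature and
    the curvature preview_steps ahead, then closes a fraction of the gap between
    current and target speed (first-order, proportional adjustment)."""
    v = desired_speed
    speeds = []
    last = len(curvatures) - 1
    for i, kappa in enumerate(curvatures):
        cue = max(kappa, curvatures[min(i + preview_steps, last)])
        v += gain * (target_speed(cue, desired_speed, max_lat_accel) - v) * dt
        speeds.append(v)
    return speeds


# Hypothetical road: straight, a 20 m radius curve, then straight again
road = [0.0] * 100 + [1 / 20] * 100 + [0.0] * 100
profile = simulate_speed(road)
print(round(min(profile), 1))  # 6.3 (approaches sqrt(2.0 * 20) ≈ 6.32 m/s)
```

Fitting parameters such as gain or max_lat_accel to each simulator's data is one way such a model could help explain differences between simulators.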

Lee informed workshop participants that harmonizing data resolution was one of the challenges of developing and validating this driver model. In this case, the data specifying the roadway were relatively coarse compared with the fine-grained resolution of the driver model's response process. The road curvature data in the simulator differed from the roadway curvature portrayed on the simulator screen, because the textures used to create the visual scene tend to mask discontinuities in the underlying road database. As a result, the resolution of the road database was not compatible with the driver model. Discontinuities between road segments, as in roundabouts where straight segments are followed directly by curves, introduced errors in the driver model that had to be addressed by smoothing the segments that made up the roadway database.
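The presentation does not say which smoothing method was used. A centered moving average is one simple option, shown here as an illustrative sketch. It turns an abrupt jump from zero curvature to a constant curve into a gradual ramp. The function name and curvature values are hypothetical. Transition curves such as clothoids would be a more physically motivated alternative.

```python
def smooth_curvature(curvature, window=5):
    """Centered moving average of a curvature profile (window must be odd).
    Near the ends, only the samples that exist are averaged."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed = []
    for i in range(len(curvature)):
        segment = curvature[max(0, i - half): i + half + 1]
        smoothed.append(sum(segment) / len(segment))
    return smoothed


# Hypothetical database: a straight segment that jumps directly into a curve
raw = [0.0] * 4 + [0.05] * 4
print([round(k, 4) for k in smooth_curvature(raw, window=3)])
# [0.0, 0.0, 0.0, 0.0167, 0.0333, 0.05, 0.05, 0.05]
```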

Lessons Learned: Triangulating Data in Roadway Design

During the presentation, Lee proposed the following recommendations to improve roadway scenarios replicated in driving simulation:

Approach 2: Determining Ways to Go Beyond Loop Detectors for On-Road Data

Researchers on the second project, underway at the University of Wisconsin–Madison, are collecting data beyond the spot speeds provided by loop detectors. They are analyzing intersection approaches by using on-road experiments and existing infrastructure to collect data at relatively low cost. The researchers use existing infrastructure and radar sensors on traffic signals to capture trajectory data. Their objective is to identify vehicle trajectories (i.e., position and speed across time) and to compute real-time safety performance measures for vehicle movements. One application of this project could be validating simulator data at very low cost by using existing, available data.
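The presentation does not name the safety performance measures being computed. Time to collision (TTC) is a widely used surrogate measure that can be derived from trajectory data, so it serves here as an illustration. The sketch assumes time-aligned samples of position along the lane (m) and speed (m/s) for a leader and a follower in the same lane. The 5 m vehicle length and all names are hypothetical.

```python
import math


def time_to_collision(leader_pos, leader_speed, follower_pos, follower_speed,
                      vehicle_length=5.0):
    """TTC in seconds for a follower behind a leader; inf if not closing."""
    gap = leader_pos - follower_pos - vehicle_length
    closing_speed = follower_speed - leader_speed
    if closing_speed <= 0:
        return math.inf
    return gap / closing_speed


def min_ttc(leader_track, follower_track, vehicle_length=5.0):
    """Minimum TTC over time-aligned samples; each track is a list of (t, pos, speed)."""
    return min(time_to_collision(lp, lv, fp, fv, vehicle_length)
               for (_, lp, lv), (_, fp, fv) in zip(leader_track, follower_track))


# Hypothetical trajectories sampled once per second
leader = [(0, 50.0, 10.0), (1, 60.0, 10.0)]
follower = [(0, 0.0, 15.0), (1, 15.0, 15.0)]
print(min_ttc(leader, follower))  # 8.0
```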

Approach 3: Using Drivers as Sensors

Drivers can be used as sensors in different ways to identify problems. By taking advantage of technology, researchers can use drivers' own reports to identify problems and solutions. Two examples are the safety complaints recorded in the National Highway Traffic Safety Administration (NHTSA) Vehicle Operator Questionnaire (VOQ) database and Twitter.

Safety Complaints

NHTSA compiles drivers' entries on the VOQs in a complaint database. These data can be downloaded at no cost and offer a low-cost tool for analyzing problems with vehicles.10

The complaint text can be analyzed by using latent semantic analysis, and the content can then be grouped by using cluster analysis. The results can be tracked over time, offering a way to conduct surveillance by using drivers as sensors.
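Grouping complaint text can be sketched in a simplified form. This sketch is a stand-in, not latent semantic analysis. Latent semantic analysis would first reduce a term-document matrix with a singular value decomposition. Here, each complaint is reduced to a bag of words and compared by cosine similarity. A single pass assigns each complaint to the first cluster whose leader is similar enough, or else starts a new cluster. The stop-word list, threshold, and complaint texts are hypothetical. Tallying cluster labels by month would provide the over-time view described above.

```python
import math
import re
from collections import Counter

# Small illustrative stop-word list; a real analysis would use a fuller one
STOP_WORDS = {"the", "a", "an", "to", "at", "in", "on", "when", "did", "not", "and", "of", "my"}


def bag_of_words(text):
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(w for w in words if w not in STOP_WORDS)


def cosine(a, b):
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def leader_cluster(texts, threshold=0.3):
    """Single pass: join the first cluster whose leader is similar enough,
    otherwise start a new cluster led by this text."""
    leaders, labels = [], []
    for text in texts:
        vec = bag_of_words(text)
        for k, leader in enumerate(leaders):
            if cosine(vec, leader) >= threshold:
                labels.append(k)
                break
        else:
            leaders.append(vec)
            labels.append(len(leaders) - 1)
    return labels


complaints = [  # hypothetical complaint texts
    "Brakes failed when stopping at a light",
    "Brake pedal went to the floor and the brakes failed",
    "Airbag did not deploy in the crash",
    "Airbag failed to deploy",
]
print(leader_cluster(complaints))  # [0, 0, 1, 1]
```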

Twitter Data

Lee informed workshop participants that another way to use drivers as sensors is to analyze Twitter text to identify hot trends and compare Twitter data across place and time. A University of Wisconsin–Madison computer science research team used its Socioscope approach to extract the spatio-temporal signal from Twitter and create spatio-temporal maps of a predefined target phenomenon. Issues to be aware of when using this type of data include population bias, imprecise location of phenomena, and low counts of events. The research team demonstrated this Twitter-based surveillance method by estimating the intensity of road-kill events across the continental United States.11
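The published Socioscope approach uses a considerably more sophisticated statistical model. The sketch below shows only the basic step of turning geotagged posts into a spatio-temporal map. Posts are binned into latitude/longitude grid cells and time periods. The count of event posts in each bin is then divided by the count of all posts in that bin, as a crude correction for population bias. The 1-degree cells, 7-day periods, coordinates, and names are all hypothetical.

```python
import math
from collections import Counter


def cell_period(lat, lon, day, cell_deg, period_days):
    """Map a post to a (grid cell, time period) bin."""
    cell = (math.floor(lat / cell_deg), math.floor(lon / cell_deg))
    return cell, day // period_days


def event_rate(events, all_posts, cell_deg=1.0, period_days=7):
    """Share of posts in each (cell, period) that report the target event.
    events and all_posts are lists of (lat, lon, day); events is a subset of all_posts."""
    totals = Counter(cell_period(*p, cell_deg, period_days) for p in all_posts)
    hits = Counter(cell_period(*e, cell_deg, period_days) for e in events)
    return {key: hits[key] / n for key, n in totals.items()}


# Hypothetical geotagged posts (lat, lon, day) and the subset that mention road kill
posts = [(43.1, -89.4, 0), (43.5, -89.9, 2), (43.2, -89.1, 6), (43.9, -89.5, 3), (44.2, -89.4, 1)]
road_kill = [(43.1, -89.4, 0)]
print(event_rate(road_kill, posts))
```

Low counts, one of the issues noted above, show up directly here. A bin with only a handful of posts yields a very unstable rate.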

The proof of concept can be extended into different areas by using proper filters and text classifiers. This type of data can be obtained at a low cost. Workshop participants noted several interesting possibilities for Twitter data for future consideration. These could target the following areas:

Conclusion

In conclusion, Lee informed workshop participants that triangulation of data sources provides several research opportunities; however, the following challenges still remain:

Discussion

During the discussion following the presentation, Lee informed participants that the project results would be published soon. The discussion also raised several points about using Twitter data as a new surveillance method for driver–vehicle research. When asked about the general cost of a Twitter data-collection effort, such as the one examining roadkill across the United States, Lee noted that the cost is in the range of tens of thousands of dollars.

Lee also told participants that computer technology is increasingly powerful and inexpensive, and therefore practical for research. Lee explained that the roadkill example, which used Twitter data on species, time, and place, was intended as a proof of concept to illustrate the technique because the ground truth is known. In contrast, less is known about the characteristics of teenagers who tweet, and Twitter analyses may have the potential to illuminate this issue. In response to a question about whether a generative model of curve negotiation is an example of modeling that helps with triangulation, Lee noted that such a model explains why people behave differently in different simulators. In addition, the parameters estimated from the model can help researchers understand how to account for on-road data.

Driver–Vehicle Data for Human Factors Research

Dr. Linda Ng Boyle

University of Washington

Introduction

During this presentation, Dr. Linda Ng Boyle recommended integrating methodologies rather than choosing a single methodology for human factors research on driver and vehicle data. Boyle informed workshop participants that there is immense value in considering different research and analytical tools. Multiple data sources can offer different but complementary perspectives and can provide greater insight into a common research topic. Boyle showed workshop participants examples of how data sources can complement one another, looking specifically at in-vehicle driver support systems. The proposed framework integrates different data sources to understand driving behavior and safety outcomes, using the adaptive cruise control (ACC) in-vehicle driver support system as an example.

Adaptive Cruise Control

In-vehicle driver support systems provide traffic and other information to users with the purpose of improving traffic flow and enhancing driver comfort and safety. These systems are integrated into vehicles today and also exist as cloud-based systems that can be used with smart mobile devices.

ACC is an example of an embedded in-vehicle driver support system, where a driver can set the desired speed and distance from a lead vehicle.
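To make the driver's two settings concrete, here is a sketch of one common textbook ACC formulation, a constant time-gap policy in which the system tracks either the set speed or a gap that grows with speed, whichever demands less acceleration. The gains, limits, and standstill distance are illustrative assumptions, not any manufacturer's values. The braking limit `a_min` illustrates how bounded braking authority can leave a system unable to stop in time for a vehicle stopped directly ahead; real systems also have sensing limitations that this sketch does not model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AccSettings:
    set_speed: float         # desired speed chosen by the driver, m/s
    time_gap: float          # driver's gap setting, seconds of headway
    standstill: float = 5.0  # minimum gap at zero speed, m (assumed)


def acc_command(s: AccSettings, v: float, gap: Optional[float] = None,
                v_lead: Optional[float] = None, k_speed: float = 0.3,
                k_gap: float = 0.2, k_rel: float = 0.6,
                a_min: float = -3.0, a_max: float = 1.5) -> float:
    """Return commanded acceleration (m/s^2) under a constant time-gap policy."""
    a_cruise = k_speed * (s.set_speed - v)  # track the driver's set speed
    if gap is None or v_lead is None:
        a = a_cruise  # no lead vehicle detected: plain cruise control
    else:
        desired_gap = s.standstill + s.time_gap * v  # gap grows with speed
        a_follow = k_gap * (gap - desired_gap) + k_rel * (v_lead - v)
        a = min(a_cruise, a_follow)  # the more cautious demand wins
    return max(a_min, min(a_max, a))  # actuator limits bound the response


settings = AccSettings(set_speed=30.0, time_gap=1.5)
print(acc_command(settings, v=25.0))                         # open road
print(acc_command(settings, v=30.0, gap=40.0, v_lead=30.0))  # too close
print(acc_command(settings, v=30.0, gap=30.0, v_lead=0.0))   # stopped vehicle
```

A longer `time_gap` setting enlarges the desired gap at every speed, which is one plausible reason the gap setting could relate to how often drivers feel the need to intervene.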

Boyle noted that although ACC has been available in the United States since 2001, its safety benefits have not been fully assessed. Depending on the vehicle make and model, ACC may not work in stop-and-go traffic and has limited ability to recognize a vehicle that has stopped directly ahead or a vehicle on a sharp curve.

User Survey Data

To understand consumers' perceptions and actual use of ACC, Boyle's research team conducted a survey in Washington State between 2010 and 2011. In this study, ACC owners were asked about ACC's functional limitations. Of the surveys sent, 584 were returned, and 118 of those were from actual ACC users. Many ACC users reported that the system was helpful in the very situations where it is limited: stop-and-go traffic, curved roads, and recognizing stopped vehicles. Some users reported that they did not know whether ACC assisted them under these conditions. A similar survey was conducted in Iowa between 2008 and 2009, with 514 surveys returned and 132 of them from actual ACC owners. Across both surveys, over 50 percent of drivers were not aware of the limitations of ACC, as indicated by the percentage responding “Yes” or “Don't Know.”
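The survey figures above reduce to simple proportions. The sketch below computes the share of returned surveys that came from ACC users and, for a single limitation question (for example, a hypothetical item such as "Does ACC work in stop-and-go traffic?"), the share of respondents counted as unaware, i.e., those answering "Yes" or "Don't Know." Only the 584/118 and 514/132 counts come from the presentation; the per-question tallies in the example are hypothetical.

```python
def share(part: int, whole: int) -> float:
    """Fraction of `whole` represented by `part`."""
    return part / whole


def unaware_share(yes: int, dont_know: int, no: int) -> float:
    """Fraction answering 'Yes' or 'Don't Know' to a question about a known ACC limitation."""
    return (yes + dont_know) / (yes + dont_know + no)


# Returned surveys and ACC users, as reported in the presentation.
washington_users = share(118, 584)  # about 0.202
iowa_users = share(132, 514)        # about 0.257

print(f"Washington ACC users: {washington_users:.1%}")
print(f"Iowa ACC users: {iowa_users:.1%}")
# Hypothetical tallies for one limitation question (not study data).
print(f"Unaware: {unaware_share(yes=40, dont_know=25, no=53):.1%}")
```

Counting "Don't Know" alongside "Yes" treats uncertainty about a limitation as a lack of awareness, which is the convention the presentation describes.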

During the presentation, Boyle highlighted that survey data can provide insights into drivers' motivations, attitudes, and previous experiences; however, many other factors that affect the overall safety of the driver must be gathered from other data sources. Mediating factors are based on more subjective traits but may actually have a greater influence on the driver's behavior. These factors emerge from long-term exposure to a system, in conjunction with the driver's other driving experiences, motivational factors, and limitations.

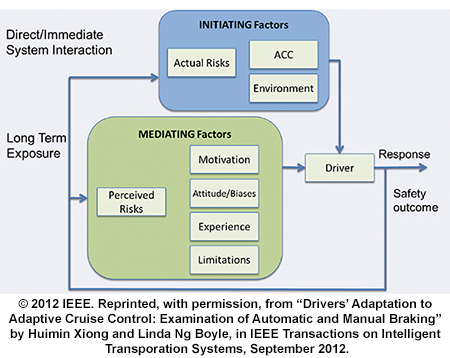

Initiating factors come from direct interaction with the system and from the feedback the driver receives while the system is in use in any given road, traffic, and environmental situation. Both mediating and initiating factors need to be considered to understand the safety implications of ACC and the impact this system has on the driver. Boyle noted that driver response can be observed in a naturalistic environment and tested under various conditions in a simulated environment. The system is a closed loop, as illustrated in figure 17: changes in the initiating and mediating factors affect the next response or action the driver takes.

Figure 17. Impacts on driver response when using adaptive cruise control.

Complementing Survey Data with Field Data

The data for the closed-loop system in figure 17 must come from many sources to assess the overall safety impacts of driver–ACC system interactions. Boyle reminded workshop participants that many mediating factors can be obtained through surveys; however, on-road or field data are better for capturing initiating factors.

Field Data

Boyle told workshop participants that the University of Michigan Transportation Research Institute (UMTRI) conducted a field operational test (FOT) with novice ACC users. The purpose of this study was to observe drivers using ACC in the real world. Investigators examined closing events in which the lead vehicle braked, grouped into three categories: low risk, conflict, and near crash. A closing event lasts from the moment the ACC's automatic braking control is activated until braking (or deceleration) stops. The likelihood that a driver would intervene while ACC was engaged differed by age: middle-aged drivers were more likely to intervene than older drivers. Boyle noted that user settings were also related to the likelihood of intervention. Users who preferred long gap settings were less likely to intervene than those who preferred short gap settings. The likelihood of driver intervention was also related to the roadway environment; drivers were less likely to intervene during highway driving.
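The closing-event definition above can be applied to logged data roughly as follows. This sketch assumes a time-ordered log with hypothetical signals for ACC automatic braking, vehicle deceleration, and the driver's brake pedal. An event opens when ACC braking activates, closes at the first frame in which neither ACC nor the driver is braking and deceleration has fallen below a small tolerance, and is flagged as an intervention if the driver braked at any point. The risk categories (low risk, conflict, near crash) are not assigned here because their criteria were not described.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Frame:
    t: float            # time in seconds
    acc_braking: bool   # ACC automatic braking active (hypothetical signal)
    decel: float        # deceleration in m/s^2, positive when slowing
    driver_brake: bool  # driver pressing the brake pedal (hypothetical signal)


@dataclass
class ClosingEvent:
    start: float
    end: float
    driver_intervened: bool


def closing_events(frames: List[Frame], decel_tol: float = 0.05) -> List[ClosingEvent]:
    events: List[ClosingEvent] = []
    start: Optional[float] = None
    intervened = False
    for f in frames:
        if start is None:
            if f.acc_braking:  # ACC automatic braking activates: event begins
                start, intervened = f.t, f.driver_brake
            continue
        intervened = intervened or f.driver_brake
        braking_over = not f.acc_braking and not f.driver_brake and f.decel <= decel_tol
        if braking_over:
            events.append(ClosingEvent(start, f.t, intervened))
            start = None
    # An event still open when the log ends is dropped as incomplete.
    return events


def intervention_rate(events: List[ClosingEvent]) -> float:
    return sum(e.driver_intervened for e in events) / len(events) if events else 0.0


log = [
    Frame(0, False, 0.0, False),
    Frame(1, True, 1.0, False),
    Frame(2, True, 1.5, True),   # driver steps in during the first event
    Frame(3, False, 0.5, False),
    Frame(4, False, 0.0, False),
    Frame(5, True, 0.8, False),
    Frame(6, False, 0.0, False),
]
events = closing_events(log)
print(events, intervention_rate(events))
```

Grouping the resulting events by driver age, gap setting, or road type would give the intervention comparisons described above.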

Complementing Field Data with Simulation Data

Although the associations between driving behavior and ACC can be observed by using field data, Boyle suggested simulation data should also be considered to gain insights on situations not observed in the real world. Simulators can be used to focus on the relationships identified from the field data that may have the greatest safety impact. They make it possible to examine “what if” scenarios, as well as to more closely examine various driver characteristics in a variety of scenarios that may not be encountered by all ACC users. Boyle told workshop participants that the benefit of establishing a relationship between the outcomes from field data and simulation lies in the ability to identify factors that impact safety while using ACC.

Simulation Data

Driving simulators allow researchers to examine the use of ACC in controlled settings and in various driving scenarios that could be of safety concern to drivers. They can also be used to examine differences between novice and experienced users. In a simulation study conducted at the National Advanced Driving Simulator (NADS), researchers tested measures previously identified in the field data in a motion-based simulator and analyzed the results with cluster analysis. The research questions included:

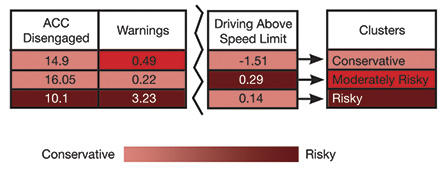

Figure 18 shows the outcomes of the cluster analysis, which grouped drivers into three categories: conservative, moderately risky, and risky drivers. The simulator data can make it possible to identify causal relationships among ACC owners and to help identify different types of risk-seeking behavior by using the same driving performance measures in the same drive scenarios. On one hand, conservative drivers disengaged the ACC system quite often and drove below the speed limit. On the other hand, risky drivers received more warnings, disengaged the ACC system less often, and drove above the speed limit.

Figure 18. Cluster analysis using simulation data.

Note: ACC = adaptive cruise control.
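The three-way grouping in figure 18 can be approximated with standard k-means clustering, sketched below. The three per-driver measures (ACC disengagements per drive, mean speed relative to the limit, and warnings per drive) echo the behaviors described above, but the exact measures, scaling, and algorithm used in the NADS study are assumptions, and the input values are made up for illustration. Features are standardized so that no single unit dominates the distances, seeds are chosen by farthest-first traversal for repeatability, and clusters are named by ranking their mean speed relative to the limit.

```python
import math
from statistics import mean, pstdev
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, ...]


def standardize(rows: Sequence[Sequence[float]]) -> List[Point]:
    cols = list(zip(*rows))
    stats = [(mean(c), pstdev(c) or 1.0) for c in cols]
    return [tuple((x - m) / s for x, (m, s) in zip(row, stats)) for row in rows]


def farthest_first(points: List[Point], k: int) -> List[Point]:
    chosen = [0]
    while len(chosen) < k:
        nxt = max(range(len(points)),
                  key=lambda i: min(math.dist(points[i], points[c]) for c in chosen))
        chosen.append(nxt)
    return [points[i] for i in chosen]


def kmeans(points: List[Point], k: int, iterations: int = 20) -> List[int]:
    centroids = farthest_first(points, k)
    labels = [0] * len(points)
    for _ in range(iterations):
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = tuple(mean(col) for col in zip(*members))
    return labels


def label_drivers(features: Dict[str, Tuple[float, float, float]]) -> Dict[str, str]:
    names = list(features)
    rows = [features[n] for n in names]
    labels = kmeans(standardize(rows), 3)
    # Rank clusters by mean raw speed relative to the limit (feature index 1).
    order = sorted(set(labels),
                   key=lambda c: mean(r[1] for r, lab in zip(rows, labels) if lab == c))
    rank_names = ["conservative", "moderately risky", "risky"]
    cluster_name = {c: rank_names[i] for i, c in enumerate(order)}
    return {n: cluster_name[lab] for n, lab in zip(names, labels)}


drivers = {  # made-up values: (disengagements per drive, km/h over limit, warnings per drive)
    "A": (6, -5, 0), "B": (5, -4, 1), "C": (3, 2, 2),
    "D": (3, 3, 3), "E": (1, 9, 6), "F": (0, 10, 7),
}
for name, group in sorted(label_drivers(drivers).items()):
    print(name, group)
```

Naming clusters after the fact, by one interpretable measure, mirrors how cluster analyses are usually reported; the algorithm itself only returns unlabeled groups.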

Complementing Simulation Data with Survey Data

Boyle told workshop participants that simulation data may not be sufficient to explain all of the drivers' behavior. Survey methods can be used in conjunction with the simulator data to understand why some drivers showed a greater propensity for risk. Surveys can capture information on drivers' perceptions of ACC, willingness to use ACC, and understanding of ACC. Perceived risks, motivation, attitudes or biases, experience, and limitations are all mediating factors that can be obtained from survey data.

Through the analysis of survey data, driving behavior can be classified according to risk propensity. For example, survey results showed that risky drivers tended to be too comfortable trusting ACC and were easily distracted. Moderately risky drivers had the lowest level of trust in ACC and were confident in their driving skills. Finally, conservative drivers demonstrated the highest level of overall trust in the system and exhibited cautious driving styles. Boyle noted that these findings demonstrate the value of using survey data to complement simulation data. Although simulation can quantify the objective performance associated with risky behavior, surveys can extend the analysis by categorizing driving behavior according to the driver's confidence and trust in the ACC system.
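Linking survey responses to simulator results amounts to joining per-participant records. A minimal sketch, assuming each simulator participant has a hypothetical ID shared with his or her survey response and a single numeric trust score: it averages trust within each simulator-derived cluster and skips participants missing from either source. The IDs and scores in the example are invented.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List


def mean_trust_by_cluster(cluster_of: Dict[str, str],
                          trust_score: Dict[str, float]) -> Dict[str, float]:
    """Average survey trust score per simulator cluster, over participants present in both."""
    grouped: Dict[str, List[float]] = defaultdict(list)
    for pid, cluster in cluster_of.items():
        if pid in trust_score:  # keep only participants with both simulator and survey data
            grouped[cluster].append(trust_score[pid])
    return {cluster: mean(scores) for cluster, scores in grouped.items()}


clusters = {"P1": "risky", "P2": "risky", "P3": "conservative", "P4": "moderately risky"}
trust = {"P1": 4.0, "P2": 5.0, "P3": 6.0}  # P4 did not return a survey
print(mean_trust_by_cluster(clusters, trust))
```

Dropping unmatched participants keeps the comparison within a single sample, at the cost of fewer cases per cluster.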

Integration of Data Sources

During the presentation, Boyle showed that driving behavior can be measured by using field, simulation, and survey data. Field data measure actual behavior on the road. Behaviors identified in the field can then be manipulated in a simulated environment to observe and identify the factors that influence a response. Survey data were used to understand drivers' motivations, perceptions, and preferences for ACC use. When these different perspectives are considered together, the complete picture of safety outcomes emerges; however, to understand how this information fits together, it is important to recognize that exposure to this technology will change driving behavior. An FOT methodology can provide insights on initial exposure, but drivers will behave differently after becoming accustomed to an ACC system. Similarly, Boyle noted that if an ACC system is embedded in a driver's personal vehicle for several years, a different driving behavior profile will result because of adaptation.

Adaptation and Road Safety

Boyle told workshop participants that a driver's behavior may differ depending on how long the driver has been exposed to an ACC system. Using an ACC system for the first time is accompanied by a novelty effect that results in high performance. Over time, drivers' attitudes, expectations, and perceptions of the ACC system may change, which can affect their longer term use of ACC systems. Drivers can also experience positive and negative transitions when they switch to vehicles without ACC capabilities. Boyle suggested that behavioral adaptation may explain some of the variation among users and the differences in driving behavior between conservative and risky drivers.

Conclusion

In this presentation, Boyle discussed the importance of drawing on multiple data sources in human factors research. As technology evolves, different systems are studied, but the need to find associations and causality persists. Different research methods can complement each other to explain outcomes. A research framework built around a driver model assigns different information needs to different data sources. This type of framework enhances the value of each dataset without ignoring the limitations of each method. Identifying differences not only between tools but also within methods reveals complementary aspects of the data sources. For instance, the same tool may produce different results in different labs; different simulators have produced different outcomes because of geographic variations. It is therefore necessary to compare these outcome variations to validate findings and to identify individual differences. Finally, Boyle noted that triangulating data is crucial to obtain the complete picture of safety outcomes when using ACC systems or other in-vehicle systems.

Discussion

The workshop participants discussed several topics following the presentation. These are summarized below:

Acknowledgments

The work described in this presentation summary was supported by funding from the National Science Foundation (NSF, IIS Grant No. 1027609), National Highway Traffic Safety Administration (Contract No. DTNH 22-06-D-00043, Task Order 0005), with data collected at UMTRI for an FOT project on intelligent cruise control, and the University of Iowa National Advanced Driving Simulator (NADS). Graduate students David Dickie, Huimin Xiong, Jarrett Bato, and Yuqing Wu assisted with data collection and analysis. Any opinions, findings, and conclusions or recommendations expressed in this report are those of the presenter (L. Boyle) and do not necessarily reflect the views of NSF, USDOT, UMTRI, or the University of Iowa.

Additional Resources

Papers

1 European Road Safety Observatory (2012). Traffic Safety Basic Facts. Retrieved July 7, 2014, from http://ec.europa.eu/transport/road_safety/pdf/statistics/dacota/bfs20xx_dacota-swov-cyclists.pdf.

2 National Highway Traffic Safety Administration (2012). Bicyclists and Other Cyclists. Retrieved July 7, 2014, from http://www-nrd.nhtsa.dot.gov/Pubs/811624.pdf.

3 Klauer, S.G., Dingus, T.A., Neale, V.L., Sudweeks, J.D., & Ramsey, D.J. (2006). The Impact of Driver Inattention on Near-Crash/Crash Risk: An Analysis Using the 100-Car Naturalistic Driving Study Data. Washington, DC: Department of Transportation, National Highway Traffic Safety Administration.

4 Olson, R.L., Hanowski, R.J., Hickman, J.S., & Bocanegra, J. (2009). Driver Distraction in Commercial Vehicle Operations. Washington, DC: Department of Transportation, Federal Motor Carrier Safety Administration.